[1 of 6] Comparison between Bitmap and Video Codec (Citrix)

Today I want to start with the first blog of the remoting protocol series.

Before we start a VDI or RDSH project, we should think about the use cases the customer wants to cover with the vGPU solution. In the past, I’ve seen a lot of projects where the customer reported issues after moving to a GPU-enabled desktop. But in most situations the cause is not the GPU but a remoting protocol that is not configured or optimized for the given use case.

Therefore, as a first step, we need to understand the difference between bitmap and video codecs so we can decide which policy set fits the customer’s use case.

We will use the Citrix stack for this series of blog posts, but if I find some more time, I will do the same for VMware Horizon.

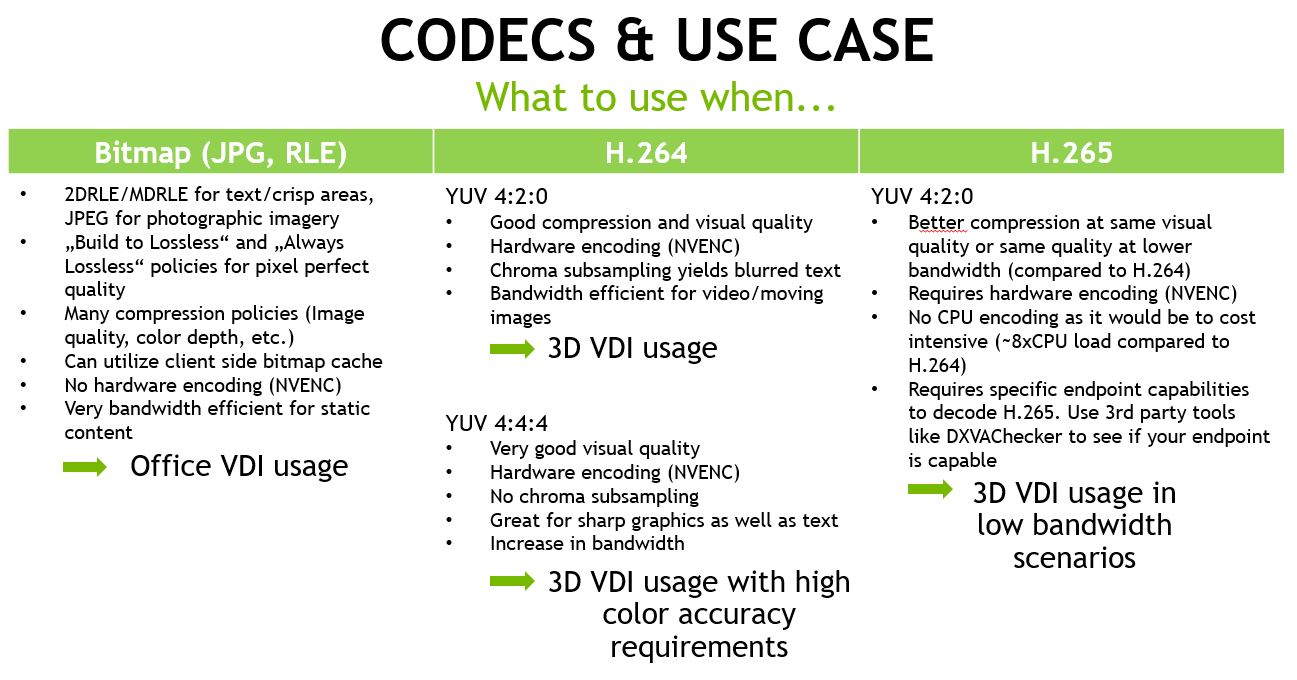

Let’s start with an overview and I will explain the use cases in more detail afterwards:

Bitmap Remoting

Bitmap remoting at Citrix is based on JPEG compression and RLE (Run Length Encoding), the latter in a Citrix-specific implementation. See here if you want to read more details about RLE. Bitmap remoting, known for many years as “Thinwire”, is a very bandwidth-efficient remoting protocol for static content. In addition, the visual quality is close to the original image, and there are options to improve it further with lossless policies to reach the “pixel perfect” quality that is sometimes necessary in verticals such as medical.

That said, it is a good choice for Office-related use cases like knowledge worker VDI or XenApp use cases.

WHY?

Most of the time the users are working with Office applications or ERP software, where we don’t see many screen changes, and therefore bitmap remoting is very efficient.
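
As a rough illustration of the run-length idea mentioned above, here is a minimal Python sketch. It is a generic textbook RLE, not the Citrix implementation, and the function names and the sample scanline are made up for this example. It shows why flat, static content such as a document window shrinks so well: long runs of identical pixels collapse into a few (value, count) pairs.

```python
def rle_encode(pixels):
    """Collapse consecutive equal values into (value, run_length) pairs."""
    runs = []
    for value in pixels:
        if runs and runs[-1][0] == value:
            runs[-1][1] += 1          # extend the current run
        else:
            runs.append([value, 1])   # start a new run
    return [(value, count) for value, count in runs]


def rle_decode(runs):
    """Expand (value, run_length) pairs back into the original sequence."""
    pixels = []
    for value, count in runs:
        pixels.extend([value] * count)
    return pixels


# Hypothetical scanline: white background with a short dark text stroke.
scanline = [255] * 12 + [0] * 3 + [255] * 9
encoded = rle_encode(scanline)
print(encoded)                         # [(255, 12), (0, 3), (255, 9)]
print(rle_decode(encoded) == scanline) # True, the round trip is lossless
```

24 pixel values become 3 pairs here. A noisy or constantly changing region would produce many short runs instead, which is one reason this approach suits office-style content better than video.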

But there are even more things to consider:

Image Quality

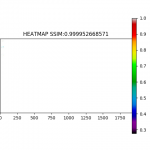

We don’t want to only use the “human eye” comparison between images to find out how close the image quality is to the original image. Therefore, we’re using a more objective method to measure this:

Structural Similarity Index (SSIM) is a perceptual metric that quantifies image quality degradation caused by processing such as data compression or by losses in data transmission. It is a full reference metric that requires two images from the same image capture: a reference image and a processed image.
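
To make the metric a bit more tangible, here is a minimal Python sketch of the SSIM formula. It is a simplified, single-window ("global") variant over grayscale pixel lists, assuming 8-bit values and the commonly used constants 0.01 and 0.03. Real measurement tools usually compute SSIM over small sliding windows and average the results, so this is not the exact method behind the numbers in this post. The function name and the sample data are made up for the example.

```python
def global_ssim(reference, processed, dynamic_range=255):
    """Single-window SSIM for two equally sized grayscale pixel lists."""
    if len(reference) != len(processed) or not reference:
        raise ValueError("images must be non-empty and the same size")
    n = len(reference)
    mean_r = sum(reference) / n
    mean_p = sum(processed) / n
    var_r = sum((r - mean_r) ** 2 for r in reference) / n
    var_p = sum((p - mean_p) ** 2 for p in processed) / n
    cov = sum((r - mean_r) * (p - mean_p)
              for r, p in zip(reference, processed)) / n
    # Small constants that stabilise the division for flat regions.
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2
    return ((2 * mean_r * mean_p + c1) * (2 * cov + c2)) / (
        (mean_r ** 2 + mean_p ** 2 + c1) * (var_r + var_p + c2)
    )


# Hypothetical data: a sharp black/white edge and a slightly blurred copy.
sharp = [0, 0, 0, 0, 255, 255, 255, 255]
blurred = [0, 0, 0, 64, 191, 255, 255, 255]

print(f"identical: {global_ssim(sharp, sharp):.3f}")
print(f"blurred:   {global_ssim(sharp, blurred):.3f}")
```

Identical images score 1, and the score drops as the processed image drifts away from the reference, which is what the SSIM heatmaps in this post visualize per region.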

Endpoint

Most customers want to use a thin client instead of a fat client for their end users. These thin clients are often quite old or don’t support hardware decoding at all (we will discuss this later). Bitmap remoting doesn’t need specific hardware at the endpoint and consumes few resources, so it will run on every thin client available.

Encoding CPU load

As just mentioned for the endpoint, there is also only moderate CPU load necessary to do bitmap compression. But there is no option to offload the encoding to the GPU (NVENC).

Citrix Policy Set

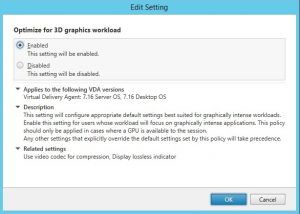

I recommend the following policy settings to “force” bitmap remoting:

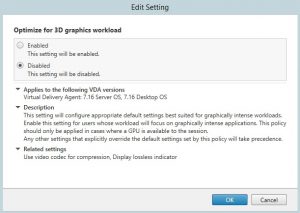

- Optimize for 3D graphics workload ->Disabled

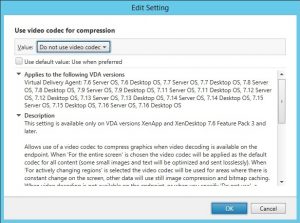

This policy is not really relevant here and can be disabled.

- Use video codec for compression -> Do not use video codec

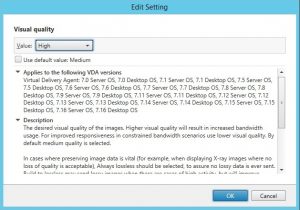

To force bitmap remoting, we must not allow the video codec to be used.

- Visual Quality -> High

This policy should be configured depending on your visual quality requirements. “High” is a good starting point, and you may require “build to lossless” or “always lossless” for the pixel perfect quality use cases mentioned earlier.

Video Codecs

Citrix now supports H.264 and H.265 as video codecs. In addition, H.264 is available in two variants: YUV420 and YUV444. For H.265 we currently only have YUV420. Comparison and differences will be explained in more detail in the other blogs of this series. Here I want to focus on when you should choose a video codec and …

…WHY?

as we have seen above, bitmap remoting works pretty well for static content but as soon as moving images or video playback comes into play, the situation is different. The higher the framerate required (30fps, 60fps) the more images need to be transferred with bitmap remoting and this heavily impacts the bandwidth required and the overall performance. This is exactly where the video codec makes sense.
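
A quick back-of-the-envelope calculation shows how the framerate drives the amount of pixel data. The sketch below computes the raw, uncompressed bitrate under the assumption that the whole screen changes on every frame, with an assumed 1920x1080 desktop at 24 bits per pixel. Bitmap remoting compresses this data, so real numbers are far lower, but the linear scaling with the framerate stays the same. The function name and parameters are made up for this example.

```python
def raw_bitrate_mbps(width, height, fps, bytes_per_pixel=3):
    """Uncompressed bitrate in Mbit/s if every pixel changes on every frame."""
    bits_per_frame = width * height * bytes_per_pixel * 8
    return bits_per_frame * fps / 1_000_000


# Assumed desktop resolution; adjust to your own environment.
for fps in (30, 60):
    print(f"{fps} fps: {raw_bitrate_mbps(1920, 1080, fps):.1f} Mbit/s raw")
```

Doubling the framerate doubles the raw data, which is why frame-by-frame image transfer gets expensive quickly for video or 3D content.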

Bandwidth

The video codec (H.264, H.265) encodes images with less resolution for chroma (color) information than for luma (brightness) information, taking advantage of the human visual system’s lower acuity for color differences than for luminance. This allows smooth video playback or working with 3D models even at high framerates with low bandwidth requirements, and is therefore also usable for WAN scenarios where users are working from home or at remote locations with low-bandwidth connections.
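
The effect of the reduced chroma resolution can be worked out with simple arithmetic. The sketch below counts the samples per frame for YUV444 (full-resolution color) and YUV420 (each chroma plane halved in both dimensions). It only covers the subsampling step, not the rest of the codec, and the function name and resolution are assumptions for this example.

```python
def samples_per_frame(width, height, scheme):
    """Count luma plus chroma samples for one frame."""
    luma = width * height
    if scheme == "444":
        # Two chroma planes at full resolution.
        chroma = 2 * width * height
    elif scheme == "420":
        # Two chroma planes at half width and half height (rounded up).
        chroma = 2 * ((width + 1) // 2) * ((height + 1) // 2)
    else:
        raise ValueError(f"unsupported scheme: {scheme}")
    return luma + chroma


full = samples_per_frame(1920, 1080, "444")
sub = samples_per_frame(1920, 1080, "420")
print(full)        # 6220800
print(sub)         # 3110400
print(sub / full)  # 0.5
```

So YUV420 hands the encoder only half the samples before any further compression happens. The price is that four neighbouring pixels share one color value, which matters for fine colored detail such as text.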

Image Quality

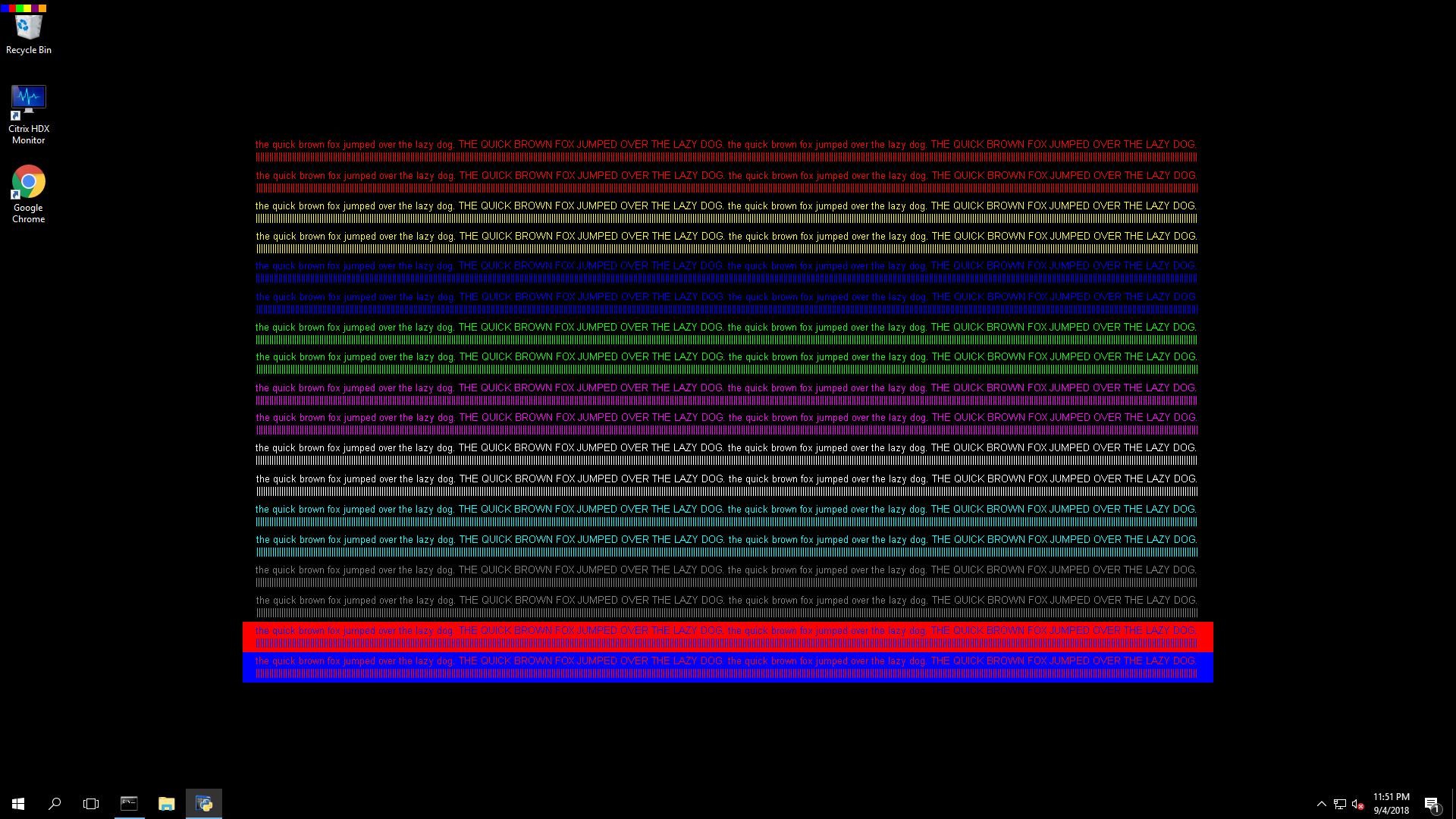

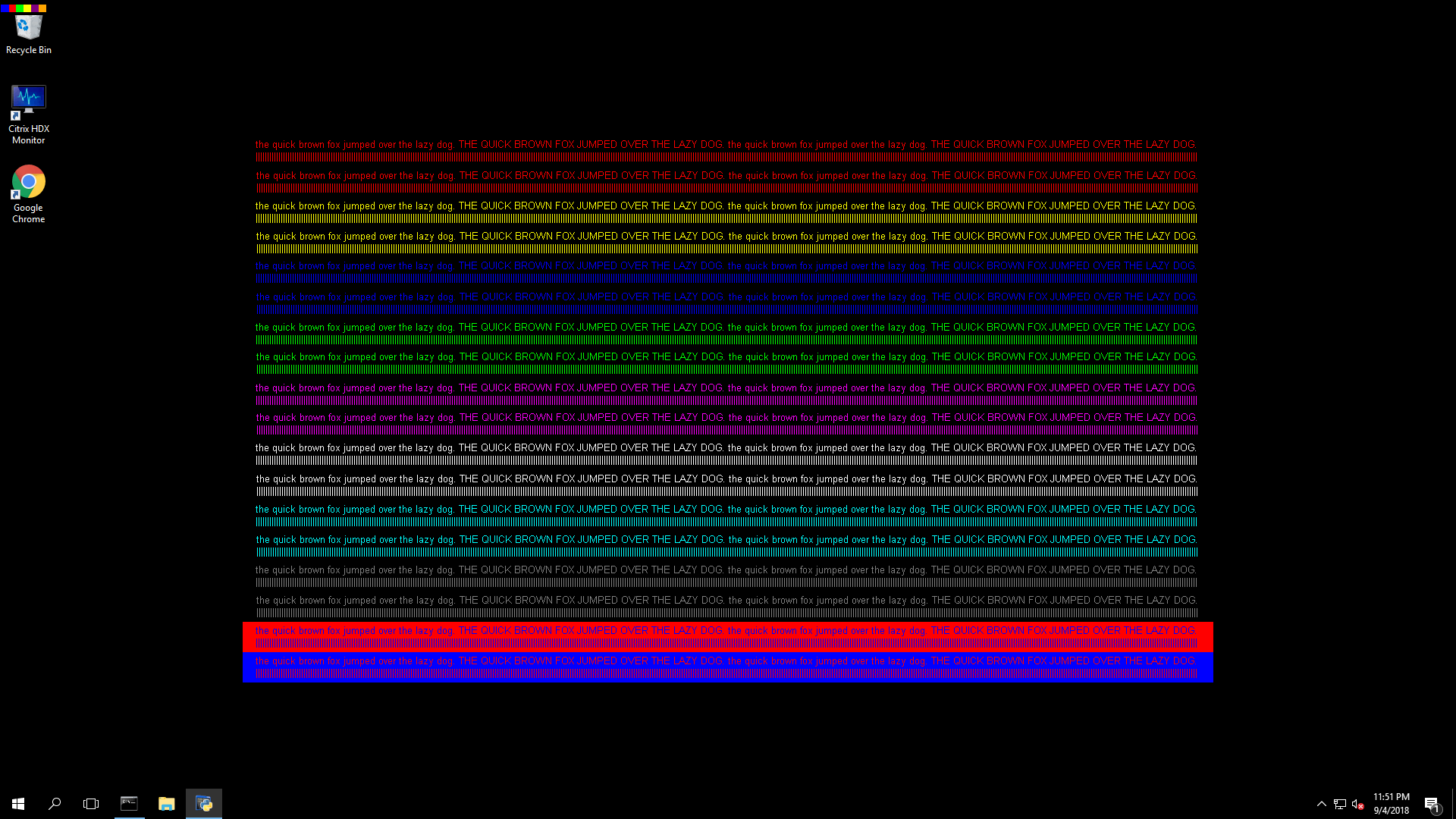

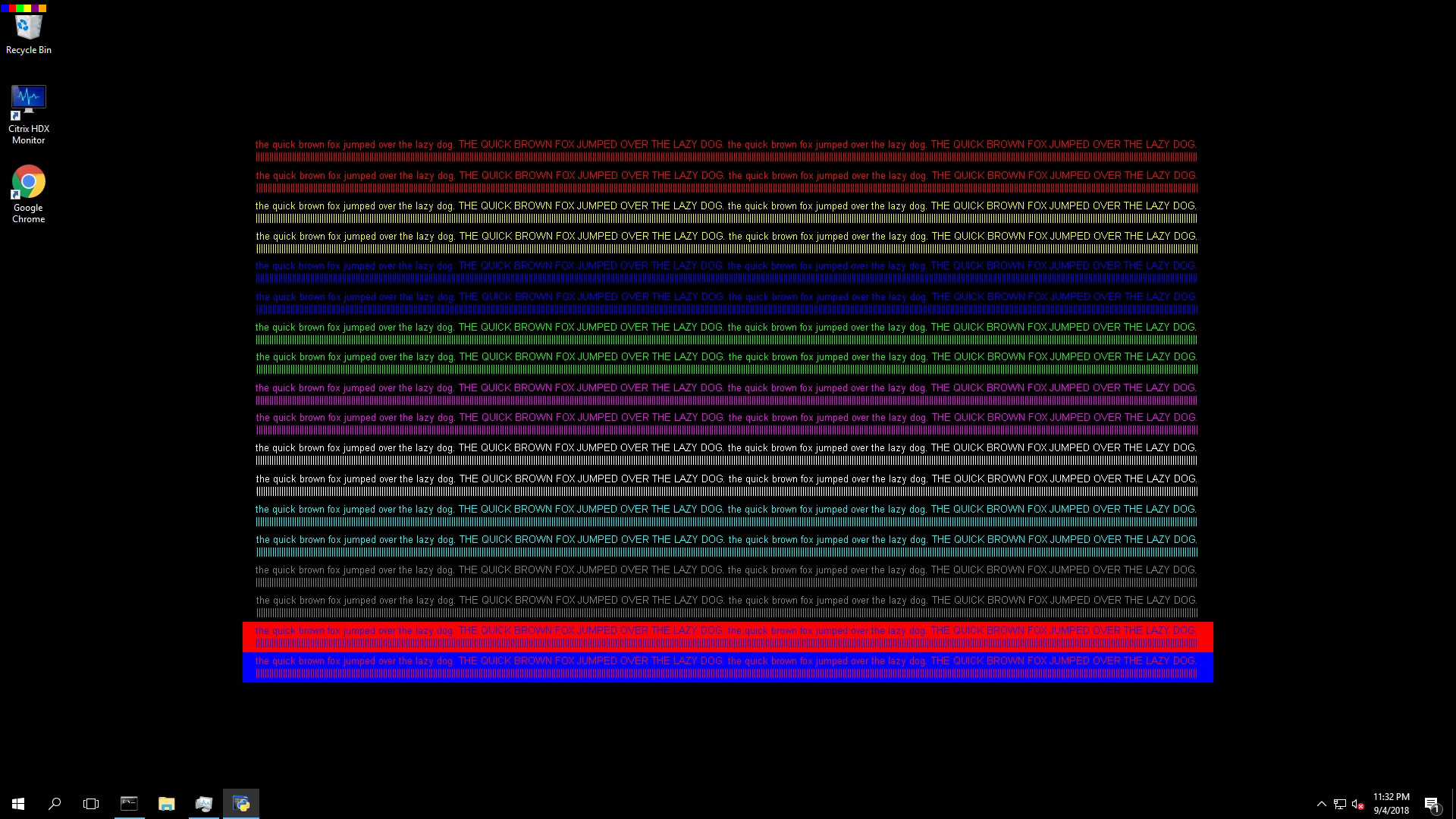

For video codecs with a YUV420 implementation (Citrix default), the “chroma subsampling effect” can cause blurriness, especially for text in specific constellations. This is not an issue for video playback or moving objects, but it can be disturbing when working with office applications, for example. We will also show you the behavior with the same reference image and SSIM measurement we already used for bitmap remoting:

Here you should already see a huge difference in the “human eye” comparison between the reference image and the captured image. In addition, the SSIM heatmap shows a score of 83%. You should now better understand why users may complain about text blurriness with YUV420.

Latency

As soon as we use a video codec like H.264, we need to encode the captured images on the server side and decode the stream on the endpoint. This is quite an “expensive” task and requires up to 1 vCPU with H.264 if we cannot encode in hardware. The good thing is that Citrix supports hardware encoding (NVENC) with our Tesla GPUs, so we can offload this task to dedicated ASICs on the GPU. Encoding in hardware is always faster than CPU-based encoding, and therefore we see a good reduction in latency. To give you an example, you can reckon with about 4ms for GPU encode compared to ~30ms with CPU.
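
To put these two numbers into perspective, the following sketch compares them with the time available per frame at a given framerate. It is a deliberately simple model: it assumes frames are encoded one after another and ignores capture, network and decode time. The encode times are the example figures from this post, and the function names are made up for the illustration.

```python
def frame_budget_ms(fps):
    """Time available per frame at the given framerate, in milliseconds."""
    return 1000.0 / fps


def encode_fits_budget(encode_ms, fps):
    """True if a single encode finishes within one frame interval."""
    return encode_ms <= frame_budget_ms(fps)


# Example figures from the text: ~4 ms on the GPU, ~30 ms on the CPU.
for label, encode_ms in (("GPU (NVENC)", 4), ("CPU", 30)):
    for fps in (30, 60):
        fits = encode_fits_budget(encode_ms, fps)
        print(f"{label}: {encode_ms} ms at {fps} fps "
              f"(budget {frame_budget_ms(fps):.1f} ms) -> fits: {fits}")
```

Under these assumptions a ~30ms CPU encode just about fits into a 30fps frame interval but not into a 60fps one, while the ~4ms GPU encode leaves plenty of headroom in both cases.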

Endpoint

Our endpoint needs to be capable of decoding the video stream. So it needs to either have a hardware decoder or a modern CPU with enough performance to decode the stream. The same applies here in terms of latency: hardware decoding is preferred as it reduces the overall latency. So if we have a very old thin client, it may be unable to cope with the decoding, which leads to a degraded end user experience.

Encoding CPU load

Citrix supports NVENC (NVIDIA Encoding) for encoding in hardware. By offloading the task to the GPU, we get a massive benefit in terms of encoding CPU load, which is very low, even lower than with bitmap remoting.

Citrix Policy Set

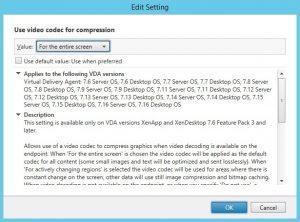

You should configure a few policies as shown here:

- Optimize for 3D graphics workload ->Enabled

Enable this policy to use NVFBC (NVIDIA Frame Buffer Capture, direct frame buffer access) and also NVENC (NVIDIA Encoding).

- Use video codec for compression -> For the entire screen

For NVENC to work even on older XenDesktop versions (7.12 – 7.16) it is necessary to use the entire screen policy.

- Visual Quality -> High

It doesn’t avoid the chroma subsampling, but High gives a pretty OK quality for most use cases. There is also not much difference in bandwidth consumption between the Medium and High policies, so I would recommend “High” as a starting point.

- Use hardware encoding -> Enabled (this is the Citrix default, therefore I didn’t create a screenshot for this)

Use Case

In the meantime, the usage of “H.264 YUV420 only” is decreasing. There are several reasons for this; the main ones are the “chroma subsampling effect” and the presence of better alternatives. With later Citrix versions, starting with 7.17, we now have “mixed codecs” (bitmap and video) that reduce the use cases for H.264 entire screen to “3D VDI usage” where high color accuracy is not a mandatory requirement. I will explain the mixed codecs in detail in another blog post of this series.

If you would like to view the on demand recording of our GTC session on choosing the right protocol for your VDI environment , click here

